A Quick Guide to LangChain

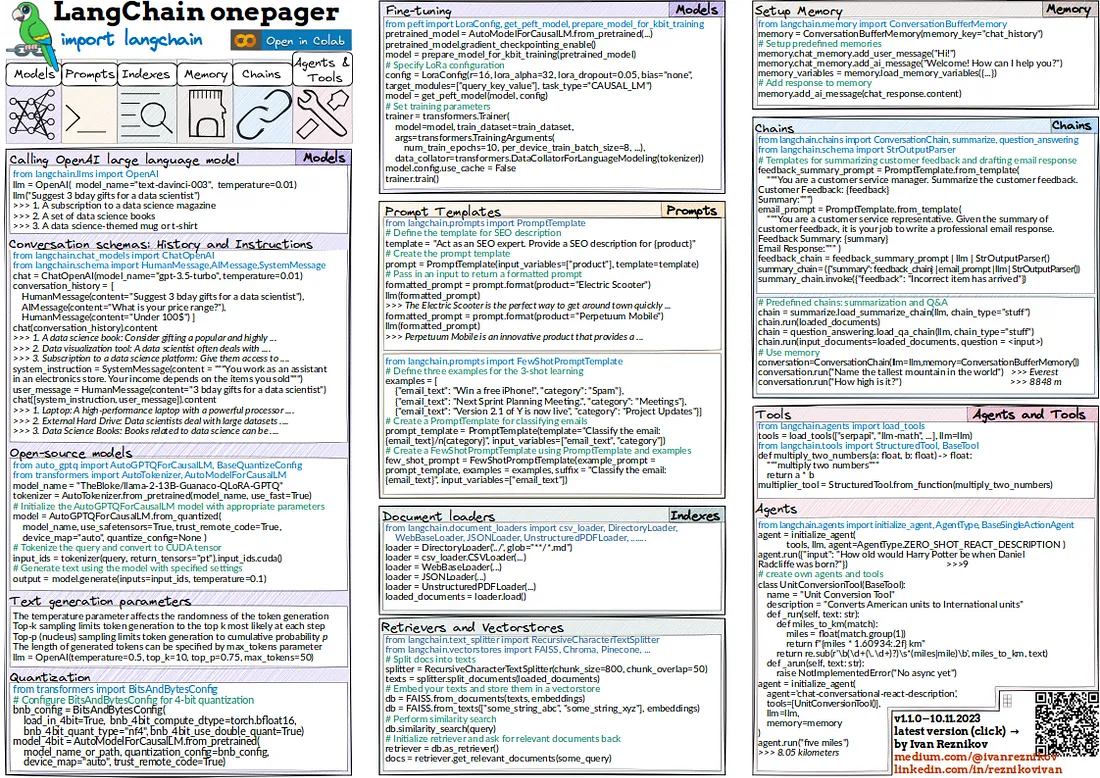

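The one-pager I created is my summary of LangChain basics. In this article, I walk through sections of its code and describe the starter pack you need to get going with LangChain.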

Models

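A model in LangChain is any language model, such as OpenAI's text-davinci-003, gpt-3.5-turbo, gpt-4, and gpt-4-turbo, or LLaMA, Falcon, and others, that can be used for various natural language processing tasks.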

The following code shows how to initialize and use a language model in LangChain (OpenAI in this example).

from langchain.llms import OpenAI

llm = OpenAI(model_name="text-davinci-003", temperature=0.01)

print(llm("Suggest 3 bday gifts for a data scientist"))

>>>

1. A subscription to a data science magazine or journal

2. A set of data science books

3. A data science-themed mug or t-shirt

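As you can see, we initialize an LLM and call it with a query. All tokenization and embedding happen behind the scenes. We can also manage the conversation history and add system instructions to the chat for more flexible responses.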

from langchain.chat_models import ChatOpenAI

from langchain.schema import HumanMessage, AIMessage, SystemMessage

chat = ChatOpenAI(model_name="gpt-3.5-turbo", temperature=0.01)

conversation_history = [

HumanMessage(content="Suggest 3 bday gifts for a data scientist"),

AIMessage(content="What is your price range?"),

HumanMessage(content="Under 100$"),

]

print(chat(conversation_history).content)

>>>

1. A data science book: Consider gifting a popular and highly recommended book on data science, such as "Python for Data Analysis" by Wes McKinney or "The Elements of Statistical Learning" by Trevor Hastie, Robert Tibshirani, and Jerome Friedman. These books can provide valuable insights and knowledge for a data scientist's professional development.

2. Data visualization tool: A data scientist often deals with large datasets and needs to present their findings effectively. Consider gifting a data visualization tool like Tableau Public or Plotly, which can help them create interactive and visually appealing charts and graphs to communicate their data analysis results.

3. Subscription to a data science platform: Give them access to a data science platform like Kaggle or DataCamp, which offer a wide range of courses, tutorials, and datasets for data scientists to enhance their skills and stay updated with the latest trends in the field. This gift can provide them with valuable learning resources and opportunities for professional growth.

system_instruction = SystemMessage(

content="""You work as an assistant in an electronics store.

Your income depends on the items you sold"""

)

user_message = HumanMessage(content="3 bday gifts for a data scientist")

print(chat([system_instruction, user_message]).content)

>>>

1. Laptop: A high-performance laptop is essential for any data scientist. Look for a model with a powerful processor, ample RAM, and a large storage capacity. This will allow them to run complex data analysis tasks and store large datasets.

2. External Hard Drive: Data scientists deal with massive amounts of data, and having extra storage space is crucial. An external hard drive with a large capacity will provide them with a convenient and secure way to store and backup their data.

3. Data Visualization Tool: Data visualization is an important aspect of data science. Consider gifting them a subscription to a data visualization tool like Tableau or Power BI. These tools will help them create visually appealing and interactive charts, graphs, and dashboards to present their findings effectively.

As you can see, we can steer the conversation in a particular direction using different message types: HumanMessage, AIMessage, and SystemMessage.
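To make the three message types more concrete, here is a minimal sketch in plain Python (no LangChain required) of how a conversation could be flattened into role-tagged dictionaries. The `Message` dataclass and the `to_payload` helper are hypothetical names used only for illustration; the role strings `system`, `user`, and `assistant` follow the convention of OpenAI's chat API.

```python
from dataclasses import dataclass


# Hypothetical stand-in for LangChain's message classes (illustration only)
@dataclass
class Message:
    role: str      # "system", "user", or "assistant"
    content: str


def to_payload(messages):
    """Convert messages into a list of role/content dictionaries."""
    return [{"role": m.role, "content": m.content} for m in messages]


history = [
    Message("system", "You work as an assistant in an electronics store."),
    Message("user", "3 bday gifts for a data scientist"),
]

payload = to_payload(history)
print(payload)
```

The system message comes first, so it frames every later turn; this is why the electronics-store instruction changes the gift suggestions.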

Open Source

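Now let's talk about open-source models. Below is a typical example of initializing and using a pre-trained language model for text generation. The code covers tokenizer usage, model configuration, efficient inference with quantization (a few more snippets on this follow below), and CUDA support.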

from auto_gptq import AutoGPTQForCausalLM

from transformers import AutoTokenizer

from torch import cuda

# Name of the pre-trained model

model_name = "TheBloke/llama-2-13B-Guanaco-QLoRA-GPTQ"

# Initialize the tokenizer for the model

tokenizer = AutoTokenizer.from_pretrained(model_name, use_fast=True)

# Initialize the AutoGPTQForCausalLM model with specific configurations

# This is a GPTQ-quantized checkpoint, suitable for efficient inference

model = AutoGPTQForCausalLM.from_quantized(

model_name,

use_safetensors=True, # Enables SafeTensors for secure serialization

trust_remote_code=True, # Trusts the remote code (not recommended for untrusted sources)

device_map="auto", # Automatically maps the model to the available device

quantize_config=None # Custom quantization configuration (None for default)

)

# The input query to be tokenized and passed to the model

query = "<Your input text here>"

# Tokenize the input query and convert it to a tensor format compatible with CUDA

input_ids = tokenizer(query, return_tensors="pt").input_ids.cuda()

# Generate text; do_sample=True is needed for the temperature setting to take effect

output = model.generate(input_ids=input_ids, do_sample=True, temperature=0.1)

Text Generation

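During text generation, you can strongly influence the output by adjusting several parameters:

- temperature controls the randomness of token generation.

- Top-k sampling restricts each generation step to the k most likely tokens.

- Top-p (nucleus) sampling restricts generation to the smallest set of most likely tokens whose cumulative probability reaches p.

- max_tokens sets the maximum number of tokens to generate.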

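# top_k is left out here because the OpenAI API does not expose it; other backends (for example Hugging Face generate()) do

llm = OpenAI(temperature=0.5, top_p=0.75, max_tokens=50)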
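The effect of these parameters can be sketched in plain Python. The following is a simplified illustration, not the implementation used by any particular library: the toy vocabulary, the logits, and the helper names `softmax` and `filter_top_k_top_p` are assumptions made for this example.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def filter_top_k_top_p(probs, top_k=None, top_p=None):
    """Keep the top_k most likely tokens, then the smallest prefix whose mass reaches top_p."""
    ranked = sorted(enumerate(probs), key=lambda pair: pair[1], reverse=True)
    if top_k is not None:
        ranked = ranked[:top_k]
    if top_p is not None:
        kept, cumulative = [], 0.0
        for idx, p in ranked:
            kept.append((idx, p))
            cumulative += p
            if cumulative >= top_p:
                break
        ranked = kept
    total = sum(p for _, p in ranked)
    # Renormalize so the surviving candidates sum to 1
    return {idx: p / total for idx, p in ranked}


vocab = ["mug", "book", "laptop", "socks"]  # toy vocabulary (assumption)
logits = [2.0, 1.0, 0.5, -1.0]              # toy scores (assumption)

probs = softmax(logits, temperature=1.0)
candidates = filter_top_k_top_p(probs, top_k=3, top_p=0.75)

random.seed(0)
token_id = random.choices(list(candidates), weights=list(candidates.values()))[0]
print(vocab[token_id], candidates)
```

With these toy numbers, only the two most likely tokens survive the top-p cut, so the sampler can never pick "laptop" or "socks". Lowering the temperature concentrates even more probability on the first token.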

Quantization

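Quantization matters a great deal for performance: storing weights in fewer bits reduces memory use and can speed up inference.

Next, we optimize a pre-trained language model for efficient execution using 4-bit quantization. BitsAndBytesConfig is what applies these optimizations, which are especially useful in deployment scenarios where model size and speed are critical.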

from transformers import BitsAndBytesConfig, AutoModelForCausalLM

import torch

# Specify the model name or path

model_name_or_path = "your-model-name-or-path"

# Configure BitsAndBytesConfig for 4-bit quantization

# This configuration is used for optimizing the model size and inference speed

bnb_config = BitsAndBytesConfig(

load_in_4bit=True, # Enables loading the model in 4-bit precision

bnb_4bit_compute_dtype=torch.bfloat16, # Sets the computation data type to bfloat16

bnb_4bit_quant_type="nf4", # Sets the quantization type to nf4

bnb_4bit_use_double_quant=True # Enables double (nested) quantization, which also quantizes the quantization constants to save more memory

)

# Load the pre-trained causal language model with 4-bit quantization

model_4bit = AutoModelForCausalLM.from_pretrained(

model_name_or_path,

quantization_config=bnb_config, # Applies the 4-bit quantization configuration

device_map="auto", # Automatically maps the model to the available device

trust_remote_code=True # Trusts the remote code (use cautiously)

)
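To build some intuition for what 4-bit storage means, here is a deliberately simplified sketch in plain Python. It uses symmetric absmax quantization with a single scale, which is an assumption made for illustration; the nf4 type configured above uses a non-uniform codebook and per-block scales, so this is not what bitsandbytes does internally. The function names and the toy weights are hypothetical.

```python
def quantize_4bit(weights):
    """Map floats to signed integer codes in [-7, 7] using one absmax scale."""
    scale = max(abs(w) for w in weights) / 7 or 1.0  # fall back to 1.0 for all-zero input
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float values from the integer codes."""
    return [c * scale for c in codes]


weights = [0.12, -0.53, 0.98, -0.05, 0.31]  # toy weights (assumption)

codes, scale = quantize_4bit(weights)
restored = dequantize(codes, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))

print(codes)
print(round(max_error, 4))
```

Each weight is stored as a small integer plus one shared scale, and the rounding error per weight is bounded by half the scale. That bound is the precision traded away for the smaller footprint.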

Fine-Tuning

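In some cases, a pre-trained language model needs to be fine-tuned. This is usually done with Low-Rank Adaptation (LoRA), which enables efficient task-specific adaptation. The code below also shows gradient checkpointing and preparation for k-bit training, two techniques that make training more memory- and compute-efficient.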

from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training

from transformers import AutoModelForCausalLM, Trainer, TrainingArguments, DataCollatorForLanguageModeling

# Load a pre-trained causal language model

pretrained_model = AutoModelForCausalLM.from_pretrained("your-model-name")

# Enable gradient checkpointing for memory efficiency

pretrained_model.gradient_checkpointing_enable()

# Prepare the model for k-bit training, optimizing for low-bit-width training

model = prepare_model_for_kbit_training(pretrained_model)

# Define the LoRa (Low-Rank Adaptation) configuration

# This configures the model for task-specific fine-tuning with low-rank matrices

config = LoraConfig(

r=16, # Rank of the low-rank matrices

lora_alpha=32, # Scale for the LoRA layers

lora_dropout=0.05, # Dropout rate for the LoRA layers

bias="none", # Type of bias to use

target_modules=["query_key_value"], # Target modules for LoRA; the names are model-specific (query_key_value matches Falcon/GPT-NeoX-style attention layers)

task_type="CAUSAL_LM" # Task type, here Causal Language Modeling

)

# Adapt the model with the specified LoRa configuration

model = get_peft_model(model, config)

# Initialize the Trainer for model training

trainer = Trainer(

model=model,

train_dataset=train_dataset, # Training dataset

args=TrainingArguments(

num_train_epochs=10,

per_device_train_batch_size=8,

# Other training arguments...

),

data_collator=DataCollatorForLanguageModeling(tokenizer, mlm=False) # Collates batches; mlm=False selects causal rather than masked language modeling

)

# Disable caching to save memory during training

model.config.use_cache = False

# Start the training process

trainer.train()
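The idea behind the `r` and `lora_alpha` settings can be sketched without any deep learning library. The example below is a toy illustration under stated assumptions: matrices are plain lists of rows, the sizes are tiny, and the helper names are hypothetical. It shows the LoRA update W + (alpha / r) · B·A, where W stays frozen and only the small matrices A and B would be trained.

```python
import random


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, lora_b, lora_a, alpha, r):
    """Return W + (alpha / r) * B @ A without modifying W."""
    delta = matmul(lora_b, lora_a)
    scale = alpha / r
    return [[wij + scale * dij for wij, dij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


d, k, r, alpha = 8, 8, 2, 4  # toy sizes (assumption); the article uses r=16, lora_alpha=32
random.seed(0)

w = [[random.gauss(0, 1) for _ in range(k)] for _ in range(d)]          # frozen weight, d x k
lora_a = [[random.gauss(0, 0.01) for _ in range(k)] for _ in range(r)]  # trainable, r x k
lora_b = [[0.0] * r for _ in range(d)]                                  # trainable, d x r, starts at zero

w_eff = lora_effective_weight(w, lora_b, lora_a, alpha, r)

full_params = d * k        # parameters updated by full fine-tuning
lora_params = r * (d + k)  # parameters updated by LoRA
print(full_params, lora_params)
```

Because B starts at zero, the adapted model initially behaves exactly like the pre-trained one. The saving grows with layer size: the trainable parameter count scales with r·(d + k) instead of d·k.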

Prompts

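LangChain lets you create dynamic prompts that guide the behavior of a language model's text generation. Prompt templates provide a structured way to elicit specific responses from a model. Let's look at a practical example in which we need to create an SEO description for a particular product.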

from langchain.prompts import PromptTemplate, FewShotPromptTemplate

# Define and use a simple prompt template

template = "Act as an SEO expert. Provide a SEO description for {product}"

prompt = PromptTemplate(input_variables=["product"], template=template)

# Format prompt with a specific product

formatted_prompt = prompt.format(product="Perpetuum Mobile")

print(llm(formatted_prompt))

>>>

Perpetuum Mobile is a leading provider of innovative, sustainable energy

solutions. Our products and services are designed to help businesses and

individuals reduce their carbon footprint and save money on energy costs.

We specialize in solar, wind, and geothermal energy systems, as well as

energy storage solutions. Our team of experienced engineers and technicians

are dedicated to providing the highest quality products and services to our

customers. We strive to be the most reliable and cost-effective provider of

renewable energy solutions in the industry. With our commitment to

sustainability and customer satisfaction, Perpetuum Mobile is the perfect

choice for your energy needs.

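There may be cases where you have a small few-shot dataset, with several examples showing how you expect the task to be performed. Let's look at a text classification example: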

# Define a few-shot learning prompt with examples

examples = [

{"email_text": "Win a free iPhone!", "category": "Spam"},

{"email_text": "Next Sprint Planning Meeting.", "category": "Meetings"},

{"email_text": "Version 2.1 of Y is now live",

"category": "Project Updates"}

]

prompt_template = PromptTemplate(

input_variables=["email_text", "category"],

template="Classify the email: {email_text} /n {category}"

)

few_shot_prompt = FewShotPromptTemplate(

example_prompt=prompt_template,

examples=examples,

suffix="Classify the email: {email_text}",

input_variables=["email_text"]

)

# Using few-shot learning prompt

formatted_prompt = few_shot_prompt.format(

email_text="Hi. I'm rescheduling daily standup tomorrow to 10am."

)

print(llm(formatted_prompt))

>>>

/n Meetings
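Under the hood, a few-shot prompt is just the formatted examples followed by the formatted suffix. Here is a minimal sketch in plain Python of how such a prompt could be assembled. The `build_few_shot_prompt` helper is a hypothetical name, and the blank-line separator between examples is an assumption for illustration, not a statement about LangChain's internals.

```python
examples = [
    {"email_text": "Win a free iPhone!", "category": "Spam"},
    {"email_text": "Next Sprint Planning Meeting.", "category": "Meetings"},
    {"email_text": "Version 2.1 of Y is now live", "category": "Project Updates"},
]

example_template = "Classify the email: {email_text}\n{category}"
suffix = "Classify the email: {email_text}"


def build_few_shot_prompt(examples, example_template, suffix, separator="\n\n", **inputs):
    """Format every example, append the formatted suffix, and join the parts."""
    parts = [example_template.format(**example) for example in examples]
    parts.append(suffix.format(**inputs))
    return separator.join(parts)


prompt_text = build_few_shot_prompt(
    examples,
    example_template,
    suffix,
    email_text="Hi. I'm rescheduling daily standup tomorrow to 10am.",
)
print(prompt_text)
```

The model sees three solved cases and one unsolved case in the same layout, so the most natural continuation is the missing category.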

Indexes

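Indexes in LangChain are used to process and retrieve large volumes of data efficiently. Instead of uploading the full text of a document to a large language model (LLM), we first index the source data and search it for relevant information, and only the top k most relevant results are passed to the model to form the response.

In LangChain, using indexes involves loading documents from various sources, splitting the text, creating a vector store, and retrieving relevant documents.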

from langchain.document_loaders import WebBaseLoader

from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain.vectorstores import FAISS

# Load documents from a web source

loader = WebBaseLoader("https://en.wikipedia.org/wiki/History_of_mathematics")

loaded_documents = loader.load()

# Split loaded documents into smaller texts

text_splitter = RecursiveCharacterTextSplitter(chunk_size=400, chunk_overlap=50)

texts = text_splitter.split_documents(loaded_documents)

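# Create a vectorstore and perform similarity search

# `embeddings` was not defined in the original snippet; OpenAIEmbeddings is assumed here

from langchain.embeddings import OpenAIEmbeddings

embeddings = OpenAIEmbeddings()

db = FAISS.from_documents(texts, embeddings)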

print(db.similarity_search("What is Isaac Newton's contribution in math?"))

>>>

[Document(page_content="Building on earlier work by many predecessors, Isaac Newton discovered the laws of physics that explain Kepler's Laws, and brought together the concepts now known as calculus. Independently, Gottfried Wilhelm Leibniz, developed calculus and much of the calculus notation still in use today. He also refined the binary number system, which is the foundation of nearly all digital (electronic,", metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content='mathematical developments, interacting with new scientific discoveries, were made at an increasing pace that continues through the present day. This includes the groundbreaking work of both Isaac Newton and Gottfried Wilhelm Leibniz in the development of infinitesimal calculus during the course of the 17th century.', metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content="In the 13th century, Nasir al-Din Tusi (Nasireddin) made advances in spherical trigonometry. He also wrote influential work on Euclid's parallel postulate. In the 15th century, Ghiyath al-Kashi computed the value of π to the 16th decimal place. Kashi also had an algorithm for calculating nth roots, which was a special case of the methods given many centuries later by Ruffini and Horner.", metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content='Whitehead, initiated a long running debate on the foundations of mathematics.', metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'})]

Besides similarity_search, we can also use the vector database as a retriever:

# Initialize and use a retriever for relevant documents

retriever = db.as_retriever()

print(retriever.get_relevant_documents("What is Isaac Newton's contribution in math?"))

>>>

[Document(page_content="Building on earlier work by many predecessors, Isaac Newton discovered the laws of physics that explain Kepler's Laws, and brought together the concepts now known as calculus. Independently, Gottfried Wilhelm Leibniz, developed calculus and much of the calculus notation still in use today. He also refined the binary number system, which is the foundation of nearly all digital (electronic,", metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content='mathematical developments, interacting with new scientific discoveries, were made at an increasing pace that continues through the present day. This includes the groundbreaking work of both Isaac Newton and Gottfried Wilhelm Leibniz in the development of infinitesimal calculus during the course of the 17th century.', metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content="In the 13th century, Nasir al-Din Tusi (Nasireddin) made advances in spherical trigonometry. He also wrote influential work on Euclid's parallel postulate. In the 15th century, Ghiyath al-Kashi computed the value of π to the 16th decimal place. Kashi also had an algorithm for calculating nth roots, which was a special case of the methods given many centuries later by Ruffini and Horner.", metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'}),

Document(page_content='Whitehead, initiated a long running debate on the foundations of mathematics.', metadata={'source': 'https://en.wikipedia.org/wiki/History_of_mathematics', 'title': 'History of mathematics - Wikipedia', 'language': 'en'})]
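The split, embed, and search steps above can be sketched end to end in plain Python. This is a toy illustration under stated assumptions: the splitter is a naive fixed-size window (RecursiveCharacterTextSplitter is more careful about where it cuts), the "embedding" is a simple word count rather than a learned vector, and all function names and sample sentences are hypothetical.

```python
import math
import re
from collections import Counter


def split_text(text, chunk_size, chunk_overlap):
    """Cut text into fixed-size windows that overlap by chunk_overlap characters."""
    step = chunk_size - chunk_overlap
    return [text[i:i + chunk_size] for i in range(0, len(text), step)]


def embed(text):
    """Toy embedding: word counts (a real system would use a learned embedding model)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a if w in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def similarity_search(query, chunks, k=2):
    """Return the k chunks most similar to the query."""
    query_vector = embed(query)
    return sorted(chunks, key=lambda c: cosine(query_vector, embed(c)), reverse=True)[:k]


chunks = [
    "Newton and Leibniz developed calculus in the 17th century.",
    "Al-Kashi computed the value of pi to the 16th decimal place.",
    "Euclid wrote the Elements, a foundational text of geometry.",
]

top = similarity_search("Who developed calculus?", chunks, k=1)
print(top)
print(split_text("abcdefghij", chunk_size=4, chunk_overlap=2))
```

Only the best-matching chunk would be passed to the model, which keeps the prompt short regardless of how long the source document is.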

Memory

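In LangChain, memory refers to a model's ability to remember earlier parts of a conversation or context. This is necessary to keep interactions continuous. Let's use ConversationBufferMemory to store and retrieve the conversation history.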

from langchain.memory import ConversationBufferMemory

# Initialize conversation buffer memory

memory = ConversationBufferMemory(memory_key="chat_history")

# Add messages to the conversation memory

memory.chat_memory.add_user_message("Hi!")

memory.chat_memory.add_ai_message("Welcome! How can I help you?")

# Load memory variables if any

memory.load_memory_variables({})

>>>

{'chat_history': 'Human: Hi!\nAI: Welcome! How can I help you?'}
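Conceptually, a buffer memory is a list of turns that is rendered into a single string under a chosen key. The sketch below imitates that behavior in plain Python; the `BufferMemory` class is a hypothetical stand-in written for illustration and is not LangChain's implementation.

```python
class BufferMemory:
    """Hypothetical minimal conversation buffer (illustration only)."""

    def __init__(self, memory_key="chat_history"):
        self.memory_key = memory_key
        self.messages = []  # list of (speaker, text) tuples in arrival order

    def add_user_message(self, text):
        self.messages.append(("Human", text))

    def add_ai_message(self, text):
        self.messages.append(("AI", text))

    def load_memory_variables(self):
        """Render the whole history as one string under memory_key."""
        history = "\n".join(f"{speaker}: {text}" for speaker, text in self.messages)
        return {self.memory_key: history}


toy_memory = BufferMemory(memory_key="chat_history")
toy_memory.add_user_message("Hi!")
toy_memory.add_ai_message("Welcome! How can I help you?")

variables = toy_memory.load_memory_variables()
print(variables)
```

The rendered string is what gets inserted into the next prompt, which is how a stateless model appears to remember earlier turns.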

Chains

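LangChain chains are sequences of operations that process input and produce output. Let's look at an example that builds a custom chain to draft an email response based on the provided feedback: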

from langchain.prompts import PromptTemplate

from langchain.chains import ConversationChain, summarize, question_answering

from langchain.schema import StrOutputParser

# Define and use a chain for summarizing customer feedback

feedback_summary_prompt = PromptTemplate.from_template(

"""You are a customer service manager. Given the customer feedback,

it is your job to summarize the main points.

Customer Feedback: {feedback}

Summary:"""

)

# Template for drafting a business email response

email_response_prompt = PromptTemplate.from_template(

"""You are a customer service representative. Given the summary of customer feedback,

it is your job to write a professional email response.

Feedback Summary:

{summary}

Email Response:"""

)

feedback_chain = feedback_summary_prompt | llm | StrOutputParser()

email_chain = (

{"summary": feedback_chain}

| email_response_prompt

| llm

| StrOutputParser()

)

# Run the full email chain (summary, then reply) on actual customer feedback

email_chain.invoke(

{"feedback": "Disappointed with the late delivery and poor packaging."}

)

>>>

\n\nDear [Customer],\n\nThank you for taking the time to provide us with

your feedback. We apologize for the late delivery and the quality of the

packaging. We take customer satisfaction very seriously and we are sorry

that we did not meet your expectations.\n\nWe are currently looking into

the issue and will take the necessary steps to ensure that this does not

happen again in the future. We value your business and hope that you will

give us another chance to provide you with a better experience.\n\nIf you

have any further questions or concerns, please do not hesitate to contact

us.\n\nSincerely,\n[Your Name]

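As you can see, we have two chains: one that generates a summary of the feedback (feedback_chain) and one that generates an email response based on that summary (email_chain). The chains above were created with the LangChain Expression Language, which, according to LangChain, is the preferred way to create chains.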
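The pipe syntax is ordinary Python operator overloading. As a rough sketch of the idea (not LangChain's actual classes), the hypothetical `Step` class below composes functions with `|`, and `fake_llm` is a stand-in that upper-cases text instead of calling a model.

```python
class Step:
    """Hypothetical composable step: wraps a function and supports the | operator."""

    def __init__(self, func):
        self.func = func

    def __or__(self, other):
        # Chain two steps: the output of self becomes the input of other
        return Step(lambda value: other.invoke(self.invoke(value)))

    def invoke(self, value):
        return self.func(value)


prompt_step = Step(lambda inputs: f"Summarize: {inputs['feedback']}")
fake_llm = Step(lambda text: text.upper())   # stand-in for a model call (assumption)
parser_step = Step(lambda text: text.strip())

toy_chain = prompt_step | fake_llm | parser_step
result = toy_chain.invoke({"feedback": "late delivery "})
print(result)
```

Each stage only needs to agree with its neighbors on input and output types, which is what makes it easy to swap the prompt, the model, or the parser independently.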

We can also use predefined chains, for example for summarization or simple Q&A:

# Predefined chains for summarization and Q&A

chain = summarize.load_summarize_chain(llm, chain_type="stuff")

chain.run(texts[:30])

>>>

The history of mathematics deals with the origin of discoveries in mathematics

and the mathematical methods and notation of the past. It began in the 6th

century BC with the Pythagoreans, who coined the term "mathematics". Greek

mathematics greatly refined the methods and expanded the subject matter of

mathematics. Chinese mathematics made early contributions, including a place

value system and the first use of negative numbers. The Hindu–Arabic numeral

system and the rules for the use of its operations evolved over the course of

the first millennium AD in India and were transmitted to the Western world via

Islamic mathematics. From ancient times through the Middle Ages, periods of

mathematical discovery were often followed by centuries of stagnation.

Beginning in Renaissance Italy in the 15th century, new mathematical

developments, interacting with new scientific discoveries, were made at an

increasing pace that continues through the present day.

chain = question_answering.load_qa_chain(llm, chain_type="stuff")

chain.run(input_documents=texts[:30],

question="Name the greatest Arab mathematicians of the past"

)

>>>

Muḥammad ibn Mūsā al-Khwārizmī

Besides the predefined chains for summarizing feedback, answering questions, and so on, we can build our own custom ConversationChain and integrate memory into it.

# Using memory in a conversation chain

memory = ConversationBufferMemory()

conversation = ConversationChain(llm=llm, memory=memory)

conversation.run("Name the tallest mountain in the world")

>>>

The tallest mountain in the world is Mount Everest

conversation.run("How high is it?")

>>>

Mount Everest stands at 8,848 meters (29,029 ft) above sea level.

Agents and Tools

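LangChain supports creating custom tools and agents for specialized tasks. A custom tool can be anything from a call to a third-party API to a custom Python function, and it can be integrated into a LangChain agent to perform complex operations. Now let's create an agent that converts any sentence to lowercase.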

from langchain.tools import StructuredTool, BaseTool

from langchain.agents import initialize_agent, AgentType

import re

# Define and use a custom text processing tool

def text_processing(string: str) -> str:
    """Process the text"""
    return string.lower()

text_processing_tool = StructuredTool.from_function(text_processing)

# Initialize and use an agent with the custom tool

agent = initialize_agent([text_processing_tool], llm,

agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION, verbose=True

)

agent.run({"input": "Process the text: London is the capital of Great Britain"})

>>>

> Entering new AgentExecutor chain...

I need to use a text processing tool

Action: text_processing

Action Input: London is the capital of Great Britain

Observation: london is the capital of great britain

Thought: I now know the final answer

Final Answer: london is the capital of great britain

> Finished chain.

'london is the capital of great britain'

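As you can see, our agent used the tool we defined and converted the sentence to lowercase. Now let's create a fully functional agent. To do so, we will create a custom tool that converts units in text (for example, miles to kilometers) and integrate it into a conversational agent using the LangChain framework. The UnitConversionTool class is a practical example of extending the base functionality with specific conversion logic.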

import re

from langchain.tools import BaseTool

from langchain.agents import initialize_agent

class UnitConversionTool(BaseTool):
    """
    A tool for converting American units to International units.
    Specifically, it converts miles to kilometers.
    """

    # Type annotations keep these field overrides valid across pydantic versions
    name: str = "Unit Conversion Tool"
    description: str = "Converts American units to International units"

    def _run(self, text: str) -> str:
        """
        Synchronously converts miles in the text to kilometers.

        Args:
            text (str): The input text containing miles to convert.

        Returns:
            str: The text with miles converted to kilometers.
        """
        def miles_to_km(match):
            miles = float(match.group(1))
            return f"{miles * 1.60934:.2f} km"

        return re.sub(r'\b(\d+(\.\d+)?)\s*(miles|mile)\b', miles_to_km, text)

    def _arun(self, text: str):
        """
        Asynchronous version of the conversion function. Not implemented yet.
        """
        raise NotImplementedError("No async yet")

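# Initialize an agent with the Unit Conversion Tool
# This agent type reads its history from "chat_history" as message objects,
# so a matching memory is created here (ConversationBufferMemory is imported earlier)
memory = ConversationBufferMemory(memory_key="chat_history", return_messages=True)

agent = initialize_agent(
    agent='chat-conversational-react-description',
    tools=[UnitConversionTool()],
    llm=llm,
    memory=memory
)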

# Example usage of the agent to convert units

agent.run("five miles")

>>> Five miles is approximately 8 kilometers.

agent.run("Sorry, I meant 15")

>>> 15 kilometers is approximately 9.3 miles
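To see what the tool's conversion logic does on its own, the regular expression from `_run` can be exercised directly, without an agent or a model. The snippet below reuses the same pattern and factor as the class above; the `convert_miles` wrapper and the sample sentences are assumptions added for illustration.

```python
import re

# Same pattern as in UnitConversionTool._run: a number followed by "mile" or "miles"
MILES_PATTERN = re.compile(r"\b(\d+(\.\d+)?)\s*(miles|mile)\b")


def convert_miles(text):
    """Replace every numeric mile quantity in text with its value in kilometers."""
    def miles_to_km(match):
        miles = float(match.group(1))
        return f"{miles * 1.60934:.2f} km"

    return MILES_PATTERN.sub(miles_to_km, text)


print(convert_miles("The trail is 5 miles long"))  # The trail is 8.05 km long
print(convert_miles("five miles"))                 # five miles
```

Note that the pattern only matches digits, so spelled-out quantities such as "five miles" pass through the tool unchanged.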

That completes the code shown in my LangChain one-pager.